Contributing Editor David Hand, Imperial College London, examines some of the pitfalls of administrative data (information collected primarily for business or organizational, not research, purposes):

A great deal of statistical analysis is aimed at making inferences from a sample to a population. This might be with a view to predicting the value of some characteristics for previously unseen population members, for predicting future values in a series of observations, for understanding mechanisms and processes which generate the data, or for other reasons. Statistical theory, based on a sound understanding of probability developed over the past three hundred years, has given us very powerful tools for such inferences. Increasingly, however, we find ourselves faced with a rather different data paradigm. Instead of sampled data, we now find ourselves presented with what is claimed to be all of the data.

For example, supermarkets do not record just some of the transactions made; details of all of them are entered in the database. Tax offices retain all of their records, not just a random sample. Schools do not test just some of their students, but all of them.

Data of this kind have been termed administrative data (Hand, 2018). They are data collected during the course of some administrative exercise, not primarily with a view to statistical analysis, but simply to run an operation—a supermarket, a tax system, or an education system in the examples above. But once the data have been collected, the extra cost of retaining them in a database is negligible. The data represent a sort of exhaust from the process, and can be immensely valuable for shedding light on the organisation and operation that is generating the data.

This potential has stimulated a burst of enthusiasm for the analysis of such data. In fact, it is often claimed that such data have significant advantages over traditional sampled data.

The first claimed advantage is the negligible cost of data acquisition compared with, say, conducting a survey, since the cost is already mainly borne through the administrative exercise which is driving the measurement. That is true, but effort—and hence cost—will be needed to quality assure and perhaps clean the data, as well as link them to other relevant material. Furthermore, the cost of collating a supermarket’s transaction data might be negligible to that supermarket, but you try asking for the data and see if the cost is negligible to you.

Secondly, as we have already noted, for very good reasons we might expect all of the data to be there. It is true that all of a particular supermarket’s transactions can be retained in its database. But there is more than one supermarket. Different supermarkets often tend to target slightly different population segments, so that their data sets differ in subtle ways. If your aim is to describe the past customers of the organisation for which you have data, that’s fine, but if you hope to go beyond that then various imponderables arise.

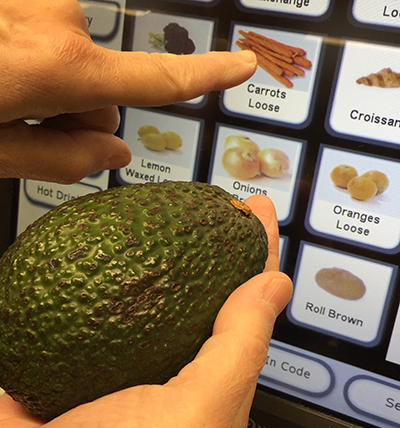

Thirdly, one might justifiably expect the data to be of high quality, since otherwise the organisation is likely to go bust. A supermarket which incorrectly charges for its goods may not last long. It’s true that there is no sampling variability in the data, but there will be all sorts of other issues. Product switching with new electronic self-checkouts provides an example. Here people have been seen to enter other vegetables as carrots, since carrots are typically the cheapest vegetables sold, far cheaper than items like avocados, for example. One supermarket even appeared to have sold more carrots than it had in stock.

“An avocado in the hand is worth several carrots at the self-checkout,” as the saying nearly goes… Switching more valuable products (like avocados) for less valuable ones (e.g., carrots) skews the administrative data collected by supermarkets.

There’s also a more subtle (but arguably at least as important) aspect to data quality. This is that the data might not be ideal for answering your particular research question. After all, they were collected to run the organisation, not primarily for later analysis to shed light on the organisation. The definition a tax office uses for “employed” may not match the definition a social scientist would like to use.

Fourthly, and this is one of the keys of the “big data” revolution, at least in commerce and government the data will be as up-to-date as it is possible to be. Supermarket transactions enter the database essentially as soon as they are made. Contrast this with the delay of months which may arise if the data are collected by a survey. Once again, however, while the data might be instantly available to the supermarket, it is unlikely to be so readily available to external analysts.

Fifthly, administrative data tell us how people really are behaving, not how they say they behave. This suggests you cannot get any closer to social reality than with administrative data. Which is fine if that social reality is what you really want to study. Carrot misrepresentation may be social reality, but it is really a minor aspect requiring resolution as far as the macroeconomics of the supermarket are concerned.

Finally, it is claimed, administrative data necessarily provide more precise definitions than alternative sources: you know precisely the make of the vodka being sold. But the fact is that sometimes simplifications are necessary. Government trade statistics group different goods into larger classes, inevitably glossing over subtle distinctions. Credit card transactions do not record at the micro-level of the precise nature of the goods being bought.

There is no doubt that, in the face of globally declining survey response rates, new strategies for data collection have great attractions. In many ways, these alternative sources of data have properties complementary to more traditional sources. And that, of course, tells us the way forward. Combining, linking, and merging data from different kinds of sources is likely to yield more accurate and more insightful perspectives on the systems we are trying to understand.

—

Reference:

Hand, D.J. (2018) Statistical challenges of administrative and transaction data (with discussion). Journal of the Royal Statistical Society, Series A, 181, 555–605.

Comments on “Hand writing: Administrative Data”